Why Getting the AI Architecture Right Is a Make-or-Break Decision

Let’s be honest: the AI space right now feels like someone emptied a bag of alphabet soup onto the table. LLMs, RAG, Agentic AI, AI Agents… every week there’s a new buzzword, and every vendor seems to be selling a slightly different version of “the future.” If you’re a business leader, a product manager, or a startup founder trying to figure out which AI architecture is actually right for your next project, you’re not alone in the confusion.

Here’s the thing: picking the wrong AI approach isn’t just a technical inconvenience. It’s a business problem. You could pour months of development time and a significant budget into an LLM-based product, only to realize it can’t access your company’s proprietary knowledge base. Or you might over-engineer a simple use case with a complex agentic system when a well-tuned RAG pipeline would have done the job in a fraction of the time.

At IPH Technologies, we’ve helped 100+ companies navigate exactly these decisions, and we’ve learned that the key to building smart AI products isn’t hype, it’s understanding. So let’s cut through the noise, break down each AI architecture clearly, and help you figure out which one (or which combination) is the right fit for your goals.

What Is a Large Language Model (LLM)?

Think of an LLM as an extraordinarily well-read entity that has absorbed an almost incomprehensible amount of text — books, articles, websites, research papers — and learned to predict, understand, and generate language with startling fluency. Models like GPT-4, Claude, Gemini, and LLaMA are all LLMs at their core.

But what does “large” actually mean here? It refers to the number of parameters — the internal weights that the model learns during training. Modern LLMs contain anywhere from 7 billion to over 1 trillion parameters, which give them their remarkable ability to reason, write, summarize, translate, and code.

How LLMs Process and Generate Information

LLMs are trained on massive datasets using a technique called self-supervised learning. During training, the model learns to predict the next word in a sequence.

Do that billions of times across trillions of tokens, and the model begins to internalize grammar, facts, logic, and even nuance. When you prompt an LLM, it uses that internalized knowledge combined with the context window you provide to generate a response.
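To make “predict the next word” concrete, here is a deliberately tiny sketch. It is not how a real LLM works internally (those use neural networks over tokens, not word counts); it only counts which word tends to follow which in a made-up corpus. The function names `train_bigram` and `predict_next` are illustrative, not from any library.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram(corpus: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    words = corpus.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" most often here
```

A real model replaces the counting table with billions of learned weights, but the training signal is the same idea: given what came before, guess what comes next.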

The key concept to grasp here is the context window: the amount of text the model can “see” and reason over at one time. Older models had context windows of a few thousand tokens; today’s frontier models support hundreds of thousands, enabling them to process entire documents in a single pass.
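One practical consequence of a limited context window is that an application has to decide what to keep when a conversation grows too long. The sketch below keeps the most recent messages that fit a token budget. It assumes whitespace-separated words as a crude stand-in for tokens (real tokenizers count differently), and `fit_to_context` is a hypothetical helper name.

```python
from typing import List


def fit_to_context(messages: List[str], max_tokens: int) -> List[str]:
    """Keep the most recent messages whose combined size fits the budget."""
    kept: List[str] = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = len(message.split())  # assumption: one word ~ one token
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = ["hello there", "please summarize the attached report", "thanks"]
print(fit_to_context(history, max_tokens=6))
```

With a budget of 6, the oldest message is dropped and the two most recent ones are kept. Larger context windows simply push that cutoff further back.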


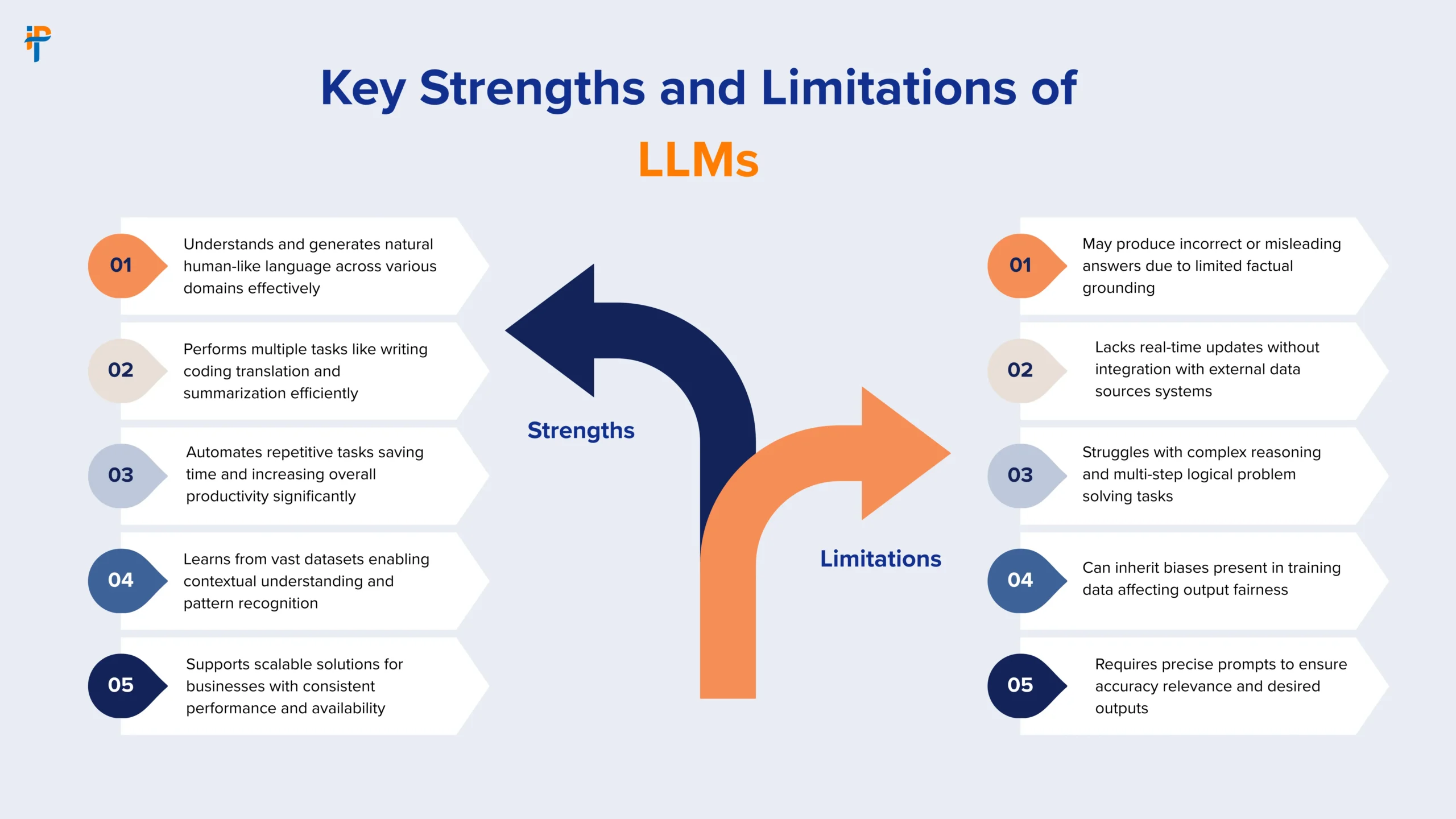

Key Strengths and Limitations of LLMs

Strengths:

- Exceptional at language understanding, generation, summarization, and translation

- Versatile across dozens of domains out of the box

- Fast inference once deployed

- Strong reasoning and coding capabilities

Limitations:

- Knowledge is frozen at a training cutoff date

- Can hallucinate — generating plausible-sounding but incorrect information

- No access to real-time or proprietary data by default

- High compute cost for training and fine-tuning

According to Stanford’s 2024 AI Index Report, LLMs have become the most commercially deployed form of AI, powering everything from customer service bots to coding assistants — but their static knowledge base remains one of their most cited enterprise limitations.

What Is Retrieval-Augmented Generation (RAG)?

Now, what if you could give that well-read entity a search engine connected to your internal documents? That’s essentially what Retrieval-Augmented Generation (RAG) does.

RAG is a technique in which an LLM’s generation capability is augmented by a retrieval mechanism that fetches relevant, up-to-date, or domain-specific information before generating a response.

Researchers at Facebook AI Research (now Meta AI) and their collaborators formally introduced the concept in a landmark 2020 paper, and it has since become one of the most widely adopted approaches for building production-ready AI applications.

How RAG Bridges the Gap Between Static Knowledge and Real-World Data

Here’s how a RAG pipeline works in simple terms:

- User submits a query — e.g., “What’s our refund policy for enterprise clients?”

- Retriever searches a vector database or document store for relevant chunks of text

- Retrieved content is injected into the LLM’s context window as additional context

- LLM generates a response grounded in that retrieved information

The beauty of RAG is that your knowledge base can be updated continuously — no retraining required. It’s like giving your LLM a live, searchable memory.
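The four steps above can be sketched in a few lines. This is a toy under stated assumptions: the “embedding” is a plain bag-of-words count rather than a learned vector, the document chunks are invented sample text, and the final LLM call is left out, so the sketch stops at the prompt that would be sent to the model. All function names are illustrative.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: List[str], k: int = 1) -> List[str]:
    """Step 2: rank stored chunks by similarity to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: List[str]) -> str:
    """Step 3: inject the retrieved text into the prompt."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


# Invented sample knowledge base, purely for illustration.
chunks = [
    "Enterprise clients may request a refund within 60 days of purchase.",
    "Support is available by email on weekdays.",
    "Shipping to Europe takes five business days.",
]
query = "What's our refund policy for enterprise clients?"  # Step 1
top = retrieve(query, chunks, k=1)
prompt = build_prompt(query, top)
print(prompt)  # Step 4 would pass this prompt to an LLM
```

Updating the knowledge base here means editing the `chunks` list; nothing about the model changes, which is the point of RAG. A production system would typically swap the toy pieces for an embedding model and a vector database.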

When Does RAG Make the Most Sense for Your Business?

RAG shines when you need:

- Domain-specific accuracy — legal, medical, financial, or technical knowledge

- Real-time or frequently updated information — product catalogs, support docs, policy changes

- Reduced hallucinations — grounding responses in source documents

- Explainability — you can point to exactly which document the answer came from

If you’re building an internal knowledge assistant, a customer support chatbot, or a document Q&A system, RAG is almost certainly part of your solution.


What Is Agentic AI?

Here’s where things get genuinely exciting. Agentic AI refers to AI systems designed to operate with goal-directed autonomy, meaning they don’t just respond to a single prompt. Instead, they plan, reason, take actions, observe the results, and adapt their behavior to achieve a broader objective.

Think of the difference between a calculator and an accountant. A calculator responds to inputs. An accountant takes a goal — “minimize our Q4 tax liability” — and figures out the steps to get there, asking for more information when needed, making judgment calls, and adjusting strategy as new information emerges. Agentic AI is much closer to the accountant.

The Core Principles That Make Agentic AI Different

Agentic AI systems are built around a few key design principles:

- Planning: Breaking down complex goals into sub-tasks

- Memory: Maintaining context across multiple steps and interactions

- Tool Use: Calling external APIs, databases, or code executors to gather information or take actions

- Reflection: Evaluating its own outputs and self-correcting when something goes wrong

- Autonomy: Operating with minimal human intervention per step

Frameworks like LangGraph, AutoGen, and CrewAI have emerged specifically to help developers build these kinds of multi-step, autonomous AI workflows.
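Stripped of any framework, the core of these principles is a plan, act, observe loop. The sketch below shows planning, tool use, and memory with a hard cap on steps; reflection is left out for brevity. It does not use LangGraph, AutoGen, or CrewAI. The planner is a hard-coded stub standing in for an LLM, and the two tools and their stock data are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple


# Hypothetical tools with made-up data, for illustration only.
def check_inventory(item: str) -> int:
    stock = {"widget": 3, "gadget": 0}
    return stock.get(item, 0)


def place_order(item: str) -> str:
    return f"ordered {item}"


TOOLS: Dict[str, Callable] = {
    "check_inventory": check_inventory,
    "place_order": place_order,
}


def plan(item: str, memory: dict) -> Optional[Tuple[str, str]]:
    """Stub planner (an LLM would sit here): pick the next step from memory."""
    if "stock" not in memory:
        return ("check_inventory", item)
    if memory["stock"] == 0 and "order" not in memory:
        return ("place_order", item)
    return None  # nothing left to do, goal satisfied


def run_agent(item: str, max_steps: int = 5) -> List[str]:
    """Plan, act, observe, repeat, with a step limit as a basic guardrail."""
    memory: dict = {}
    trace: List[str] = []
    for _ in range(max_steps):
        step = plan(item, memory)
        if step is None:
            break
        tool_name, arg = step
        result = TOOLS[tool_name](arg)  # act
        key = "stock" if tool_name == "check_inventory" else "order"
        memory[key] = result  # observe and remember
        trace.append(f"{tool_name}({arg}) -> {result}")
    return trace


print(run_agent("gadget"))
```

For an out-of-stock item the loop takes two steps (check, then order); for an in-stock item it stops after the check. The `max_steps` cap is a small example of the kind of limit autonomous systems need.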

Real-World Use Cases of Agentic AI in Business

- Automated research pipelines: an agent that searches the web, synthesizes findings, and produces a report

- Software development agents that write, test, debug, and deploy code autonomously

- Supply chain optimization agents that monitor inventory, forecast demand, and place orders

- Customer onboarding automation — agents that handle document collection, verification, and approval workflows end-to-end

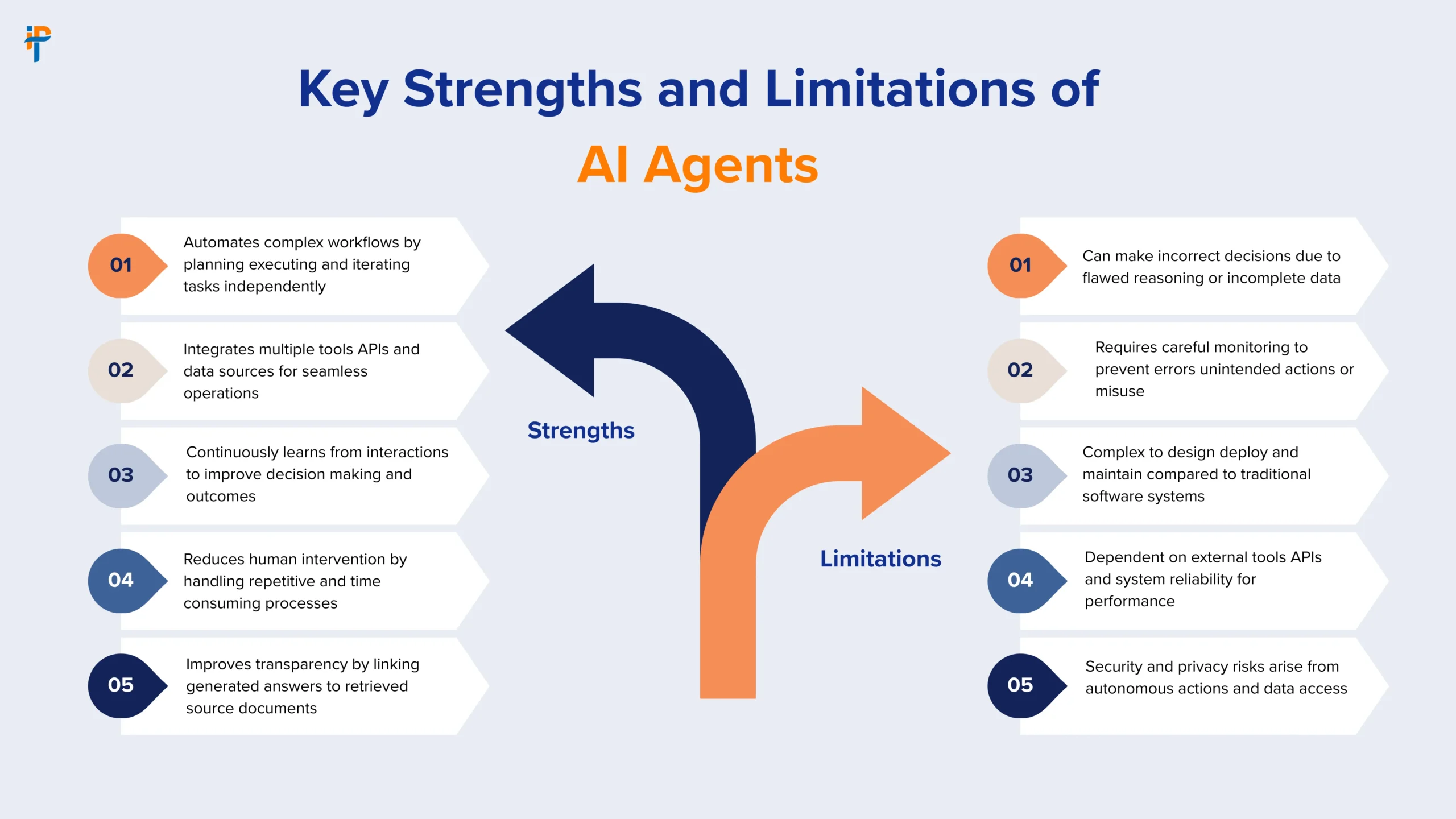

What Are AI Agents?

So if Agentic AI is the paradigm, what exactly is an AI Agent? An AI Agent is a specific, deployable implementation of that paradigm: a software entity that perceives its environment, makes decisions, and takes actions to achieve defined goals.

In practical terms, an AI agent is typically composed of:

- A core LLM (the reasoning engine)

- A set of tools (search, code execution, API calls, database access)

- Memory (short-term context + long-term storage)

- An orchestration layer (the logic that decides what to do next)
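Here is one way those four components might fit together in code. Treat it as a sketch, not a reference design: the `Agent` class, the `FINAL:` convention for ending a run, and the `fake_llm` stub (which stands in for a real model call) are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    llm: Callable[[str], str]                    # core LLM (reasoning engine)
    tools: Dict[str, Callable[[str], str]]       # set of tools
    short_term: List[str] = field(default_factory=list)   # short-term context
    long_term: Dict[str, str] = field(default_factory=dict)  # long-term storage

    def run(self, task: str, max_turns: int = 4) -> str:
        """Orchestration layer: ask the LLM what to do next until it finishes."""
        self.short_term.append(f"task: {task}")
        for _ in range(max_turns):
            decision = self.llm("\n".join(self.short_term))
            if decision.startswith("FINAL:"):
                answer = decision[len("FINAL:"):].strip()
                self.long_term[task] = answer  # remember the result
                return answer
            # Otherwise the decision is "<tool name> <argument>".
            tool_name, _, arg = decision.partition(" ")
            observation = self.tools[tool_name](arg)
            self.short_term.append(f"{tool_name} -> {observation}")
        return "stopped: turn limit reached"


def fake_llm(context: str) -> str:
    """Stub model: look something up once, then report what was found."""
    if "lookup ->" in context:
        return "FINAL: " + context.rsplit("-> ", 1)[1]
    return "lookup invoice-42"


agent = Agent(llm=fake_llm, tools={"lookup": lambda key: f"{key} is paid"})
print(agent.run("status of invoice-42"))
```

Swapping `fake_llm` for a real model call and adding more entries to `tools` changes what the agent can do, while the orchestration loop stays the same.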


AI Agents vs Agentic AI — Is There Actually a Difference?

Yes, and it’s an important distinction. Think of it this way:

- Agentic AI is the philosophy or design approach to building autonomous, goal-driven systems

- AI Agents are the concrete implementations of that philosophy

You can have an AI agent that isn’t particularly “agentic” (a single-tool, single-step agent is barely autonomous). And you can have an agentic AI system composed of multiple AI agents working together in a multi-agent architecture — each agent specializing in a different capability, collaborating to tackle complex tasks.

Types of AI Agents You Should Know About

| Agent Type | Description | Example |
|---|---|---|
| Simple Reflex Agents | React to current inputs with predefined rules | Basic FAQ chatbots |
| Model-Based Agents | Maintain an internal model of the world | Recommendation engines |
| Goal-Based Agents | Plan actions to achieve specific goals | Travel booking assistants |
| Utility-Based Agents | Optimize for maximum utility/outcome | Trading algorithms |
| Learning Agents | Improve through feedback and experience | Personalization systems |
| Multi-Agent Systems | Multiple agents collaborating on tasks | Autonomous software dev teams |

LLM vs RAG vs Agentic AI vs AI Agents: The Ultimate Side-by-Side Comparison

| Feature | LLM | RAG | Agentic AI | AI Agents |
|---|---|---|---|---|
| Core Function | Language understanding & generation | Knowledge-grounded generation | Autonomous goal execution | Task-specific autonomous action |
| Memory | Context window only | Retrieved documents | Short + long-term memory | Context + external memory |
| Knowledge Source | Training data (static) | External retrieval (dynamic) | Tools + retrieval + training | Tools + memory + training |
| Autonomy Level | None (responds to prompts) | Low (retrieves + generates) | High (plans + acts) | Medium to High |
| Tool Use | Not inherent | Retrieval tool only | Multiple tools | Multiple tools |
| Best For | Content, coding, Q&A | Domain-specific Q&A, support | Complex multi-step workflows | Specific automated tasks |
| Complexity to Build | Low–Medium | Medium | High | Medium–High |
| Cost | Medium | Medium–High | High | Medium–High |
| Hallucination Risk | High | Low–Medium | Low (with grounding) | Low–Medium |


How These Four AI Architectures Work Together in Real Projects

Here’s a secret the AI marketing world doesn’t advertise enough: these architectures aren’t mutually exclusive. In fact, the most powerful AI applications combine all four.

Imagine a smart enterprise assistant built by IPH Technologies for a logistics company:

- LLM – provides the core reasoning engine and natural language understanding

- RAG – connects the LLM to the company’s internal policy docs, shipping tables, and client records

- AI Agents – specific agents handle tasks like querying the TMS (Transport Management System), generating invoices, and sending email updates

- Agentic AI Architecture – an orchestration layer that plans and sequences these agents to handle an end-to-end shipment exception autonomously

This kind of layered approach is what separates production-grade AI from prototype demos. It’s also exactly the kind of architecture that IPH Technologies designs and delivers for enterprise clients.

Choosing the Right AI Architecture: A Practical Decision Framework

So how do you actually choose? The answer depends on a few key variables. Let’s walk through a practical framework.

Questions to Ask Before You Pick Your AI Stack

- What problem am I solving? Is it a language task (LLM), a knowledge retrieval task (RAG), a multi-step automated workflow (Agentic AI), or a specific task automation (AI Agent)?

- How dynamic is the data? If your information changes frequently, an LLM alone won’t cut it; you need RAG.

- How much autonomy do you need? If a human needs to approve every step, a simple LLM or RAG system may suffice. If you need end-to-end automation, go agentic.

- What’s your risk tolerance? Agentic systems require guardrails. The more autonomous the system, the more robust your error handling needs to be.

- What’s your timeline and budget? LLM and RAG systems can be shipped in weeks. Robust agentic systems take months of careful design and testing.
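If it helps to see the first three questions as logic, here is a rough sketch. It is a simplification for illustration, not a rule: real decisions also weigh risk tolerance, timeline, and budget, which this function ignores. The name `recommend_architecture` and its three yes/no parameters are assumptions made for this example.

```python
def recommend_architecture(dynamic_data: bool, multi_step: bool, needs_tools: bool) -> str:
    """Map three yes/no answers to a starting-point architecture."""
    if multi_step:
        base = "Agentic AI"   # end-to-end, multi-step workflow
    elif needs_tools:
        base = "AI Agent"     # a specific task that must call tools
    else:
        base = "LLM"          # a pure language task
    if dynamic_data:
        # Frequently changing or proprietary data calls for retrieval.
        return "RAG" if base == "LLM" else f"{base} + RAG"
    return base


print(recommend_architecture(dynamic_data=True, multi_step=False, needs_tools=False))
print(recommend_architecture(dynamic_data=True, multi_step=True, needs_tools=True))
```

The first call suggests RAG and the second suggests Agentic AI combined with RAG. Use the output as a conversation starter, then pressure-test it against the remaining questions.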

Project Complexity Matrix

| Use Case | Recommended Architecture |
|---|---|
| Chatbot for general queries | LLM (fine-tuned or prompted) |
| Internal document Q&A | RAG |
| Customer support assistant with knowledge base | RAG + LLM |
| Automated research & report generation | Agentic AI |
| Code generation & review pipeline | AI Agent (with tools) |
| End-to-end business process automation | Multi-Agent Agentic System |
| Personalized product recommendations | LLM + AI Agent + Retrieval |
| Autonomous data analysis & dashboarding | Agentic AI + AI Agents |


Common Mistakes Businesses Make When Choosing an AI Architecture

We’ve seen patterns repeat across the industry, and some of these mistakes are expensive. Here’s what to watch out for:

- Using a sledgehammer when you need a scalpel. Not every problem needs a complex agentic system. If you just need to summarize documents, a well-prompted LLM is your best friend.

- Skipping RAG and hoping the LLM “just knows.” It doesn’t. If your use case requires proprietary, recent, or domain-specific information — implement RAG. Period.

- Building autonomous agents without guardrails. Agentic systems that operate without human-in-the-loop checks can make expensive mistakes at machine speed. Always design for graceful failure.

- Treating AI as a plug-and-play product. AI architectures require thoughtful integration with your existing data, APIs, and processes. Off-the-shelf solutions rarely deliver production-grade results.

- Ignoring latency and cost at scale. A RAG pipeline that works fine for 10 queries per day can be a financial and performance nightmare at 10,000 queries per day. Design for scale from day one.
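The last point is easy to check with back-of-the-envelope arithmetic. The numbers below are placeholders, not real pricing: the sketch assumes 3,000 tokens per query (retrieved context plus answer) and a hypothetical rate of $0.01 per 1,000 tokens. Plug in your own provider’s figures.

```python
def monthly_cost(
    queries_per_day: int,
    tokens_per_query: int = 3000,      # assumption: context + answer
    usd_per_1k_tokens: float = 0.01,   # assumption: hypothetical price
    days: int = 30,
) -> float:
    """Estimate monthly LLM spend in USD, rounded to cents."""
    daily_tokens = queries_per_day * tokens_per_query
    return round(daily_tokens / 1000 * usd_per_1k_tokens * days, 2)


for qpd in (10, 10_000):
    print(f"{qpd:>6} queries/day -> ${monthly_cost(qpd):,.2f} per month")
```

Under these made-up numbers, 10 queries a day costs about $9 a month, while 10,000 queries a day costs about $9,000. The cost grows linearly with volume, which is why caching, smaller retrieved contexts, and cheaper models for simple queries are worth planning early.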

How IPH Technologies Builds AI Solutions That Actually Deliver Results

At IPH Technologies, we don’t just talk about these architectures — we build them. With over 500 successful projects and 430+ satisfied clients, our team has hands-on experience across the full AI stack: from fine-tuning LLMs and building RAG pipelines to designing and deploying complex multi-agent agentic systems for enterprise clients.

Our approach is built on three pillars:

- Architecture-First Thinking: Before writing a single line of code, we map your business problem to the right AI architecture. This saves you months of rework and ensures scalability from the start.

- Agile + Iterative Delivery: We use agile methodologies to deliver working AI prototypes quickly, gather real feedback, and iterate toward production-grade systems — without blowing your budget.

- Full-Stack AI Expertise: From vector databases and embedding models to orchestration frameworks and LLM fine-tuning, our team speaks the full language of modern AI development.

Whether you’re exploring your first AI use case or scaling an existing AI product to enterprise level, IPH Technologies is the partner that helps you move from vision to reality, faster and smarter.

The Future of AI Architecture: Where Is All This Heading?

The honest answer? We’re moving toward increasingly autonomous, multi-agent systems that can handle end-to-end workflows. According to McKinsey’s 2024 State of AI Report, 72% of organizations have now adopted AI in at least one business function, and the shift from single-model deployments to agentic architectures is accelerating rapidly.

Here’s what to watch in the next 2–3 years:

- Smaller, faster LLMs running at the edge (on-device AI)

- Standardization of agentic frameworks: we’ll see fewer custom builds and more reusable agent ecosystems

- Multimodal agents — agents that see, hear, and act across text, images, video, and code simultaneously

- Human-agent collaboration — the future isn’t AI replacing humans; it’s humans and agents working side-by-side, with agents handling the repetitive, data-heavy tasks while humans focus on strategy and creativity

The businesses that understand these architectures today are the ones that will deploy them most effectively tomorrow. And that’s a meaningful competitive edge.


Conclusion

Here’s the bottom line: LLMs, RAG, Agentic AI, and AI Agents are not competitors; they’re collaborators. Each represents a different layer of capability, and the best AI solutions intelligently stack these layers to solve real business problems.

LLMs give you language intelligence. RAG gives you grounded, current knowledge. Agentic AI gives you autonomous goal execution. AI Agents give you specialized, deployable task automators. Together, they form a powerful architecture that can transform the way your business operates.

The question was never which AI architecture is the best. The real question is: which combination is right for your specific problem, your data, your budget, and your team?

That’s exactly the kind of question that IPH Technologies exists to answer. With deep expertise in AI architecture, app development, and custom software engineering, we help businesses cut through the noise and build AI solutions that actually work: on time, on budget, and built to scale.

Ready to build something remarkable? Talk to our team at IPH Technologies today.
