What Is Multimodal AI? A Business Leader’s Guide with Real-World Use Cases in 2026

Let me ask you something. When you walk into a meeting, do you absorb information by reading text alone? Of course not. You look at the slides on the screen, listen to the presenter’s tone, glance at the body language across the table, and factor in a dozen other inputs — all at the same time.

That’s exactly what multimodal AI does. It doesn’t just read. It sees, hears, and reasons across multiple types of data simultaneously.

And in 2026, this isn’t a futuristic concept anymore — it’s the competitive edge that separates businesses that thrive from those that fall behind.

Whether you’re a startup founder, a CTO, or a business unit head trying to make sense of the AI landscape, this guide is for you.

We’ll break down what multimodal AI really is, why it matters right now, and show you real-world use cases across industries so you can make smarter decisions for your organization.

The Quick Answer: What Exactly Is Multimodal AI?

Multimodal AI is an artificial intelligence system that can process, understand, and generate content across multiple types of input — or “modalities” — such as text, images, audio, video, and even structured sensor data. Unlike a standard chatbot that only reads what you type, a multimodal AI model can simultaneously analyze an image you upload, understand your spoken question, and generate a relevant written or visual response.

Think of it like the difference between a musician who can only play the piano and a full orchestra.

A traditional, unimodal AI model plays one instrument. A multimodal AI model conducts the whole ensemble.

How Multimodal AI Differs from Traditional AI

Traditional AI models are built for a single lane — they’re trained on one type of data and perform tasks within that narrow scope.

A text model processes text. An image classifier processes images. These models are powerful in isolation, but the real world doesn’t work in isolation.

Multimodal AI bridges these silos. It fuses information from different sources and creates a richer, more contextually accurate understanding of the world. Instead of duct-taping three separate APIs together and hoping they play nice, a multimodal system handles everything natively — reducing pipeline complexity, minimizing errors at data handoff points, and delivering faster, more coherent outputs.

The Key Modalities Explained

When we talk about “modalities,” we mean the different types of sensory or data inputs an AI system can handle:

- Text — The foundational layer. Natural language understanding, document analysis, and summarization.

- Images — Visual recognition, medical scans, product photos, satellite imagery.

- Audio — Speech recognition, tone analysis, music generation, and call center transcription.

- Video — Real-time surveillance, sports analytics, training data review, and autonomous driving.

- Structured data — Sensor readings, financial data, IoT telemetry, spreadsheets.

The magic happens at the intersection of these modalities, where the AI doesn’t just process each input independently but reasons across all of them together.

Why Multimodal AI Is the Biggest Business Shift of 2026

If you’re still on the fence about whether multimodal AI warrants your attention, the market data should settle that debate quickly.

Market Size and Growth You Can’t Ignore

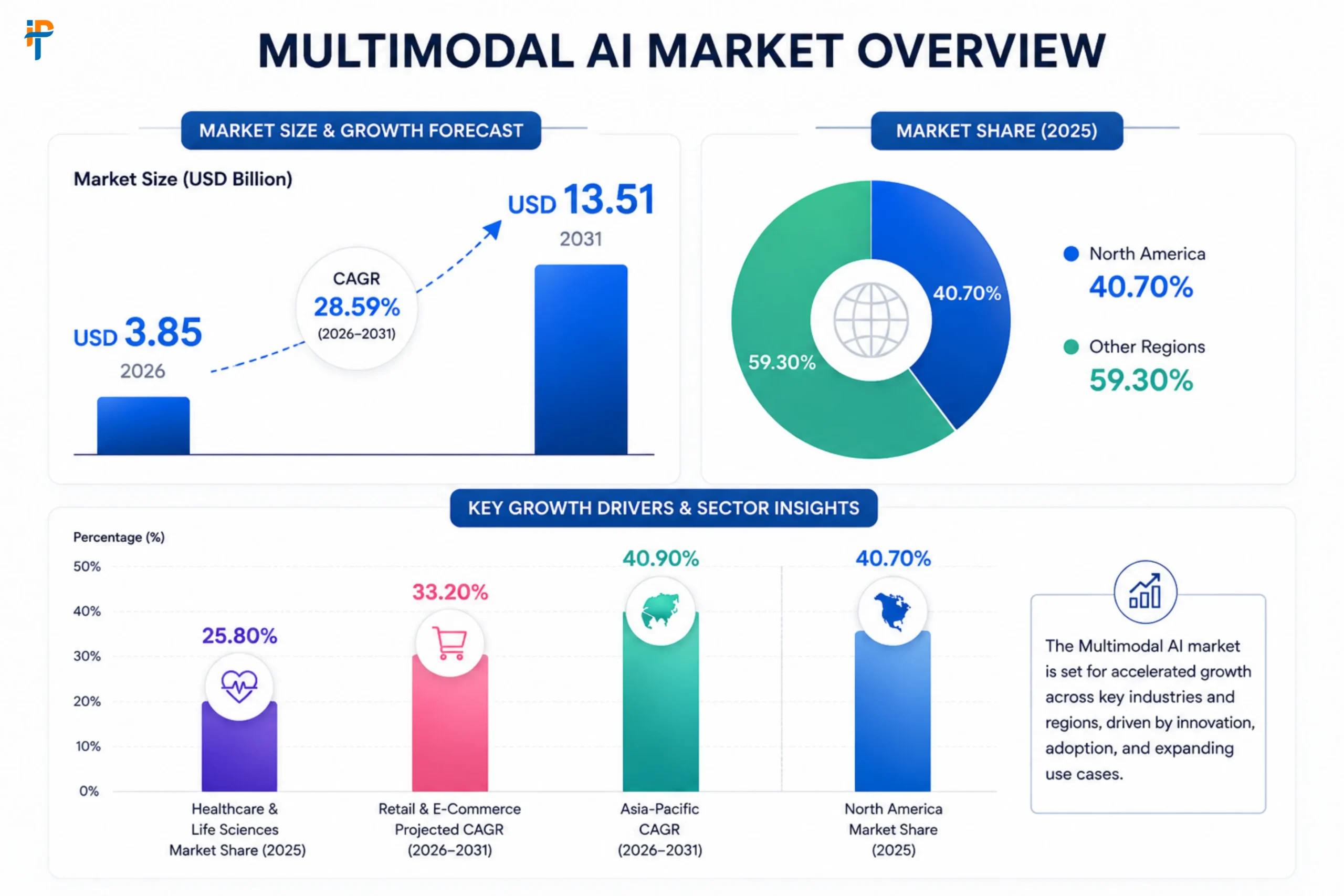

According to Mordor Intelligence, the multimodal AI market stood at approximately USD 3.85 billion in 2026 and is projected to reach USD 13.51 billion by 2031, growing at a CAGR of 28.59% over that period.

For context, that’s an industry growing to roughly 3.5 times its current size in five years.
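If you want to sanity-check those numbers yourself, here is a minimal Python sketch. It takes only the two market-size figures quoted above and derives the growth multiple and the implied compound annual growth rate. The function name `cagr` is ours, and small differences from the published rate are expected because the report presumably works from unrounded figures.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.28 means 28%)."""
    return (end_value / start_value) ** (1 / years) - 1


start_usd_bn = 3.85    # 2026 market size quoted above
end_usd_bn = 13.51     # 2031 projection quoted above
years = 2031 - 2026

growth_multiple = end_usd_bn / start_usd_bn
implied_cagr = cagr(start_usd_bn, end_usd_bn, years)

print(f"Growth multiple over {years} years: {growth_multiple:.2f}x")
print(f"Implied CAGR: {implied_cagr:.1%}")
```

The same function works for any pair of figures a vendor or analyst puts in front of you, which makes it a quick check on whether a quoted growth rate and its endpoints agree.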

Earlier projections from Grand View Research point the same way: USD 10.89 billion by 2030, at a CAGR of approximately 36.8%.

Multiple research firms triangulate around the same explosive growth story — this technology is not a niche experiment. It’s a full-scale commercial wave.

| Metric | Value |
|---|---|
| Multimodal AI Market Size (2026) | USD 3.85 Billion |
| Projected Market Size (2031) | USD 13.51 Billion |
| CAGR (2026–2031) | 28.59% |
| Healthcare & Life Sciences Market Share (2025) | 25.80% |
| Retail & E-Commerce Projected CAGR (2026–2031) | 33.20% |
| Asia-Pacific CAGR (2026–2031) | 40.90% |
| North America Market Share (2025) | 40.70% |

Source: Mordor Intelligence Multimodal AI Market Report

And it’s not just a market research story. Major tech giants like Meta, Amazon, Alphabet, and Microsoft are collectively planning to allocate up to $320 billion in AI-related capital expenditure, much of it directed toward multimodal capabilities. When the biggest companies in the world bet this heavily, it’s worth paying attention.

From Pilot Projects to Full-Scale Deployment

Here’s what’s genuinely fascinating about the AI landscape in 2026: we’ve moved from experimentation into execution. According to McKinsey, AI adoption across organizations grew from 50% in 2022 to 88% in 2025.

Capgemini’s research shows the GenAI deployment rate nearly doubled — from 20% in 2024 to 36% in 2025. The share of companies still in “pilot mode” dropped from 39% to just 13%, which means the industry has firmly crossed into full-scale implementation.

That shift from experiment to execution is precisely where multimodal AI gains its competitive significance.

Businesses that move now build institutional knowledge and workflow efficiency, early-mover advantages that compound over time.

Core Technologies Powering Multimodal AI

Understanding what’s under the hood helps you make smarter technology decisions. Multimodal AI is an orchestra of several established and emerging technical disciplines.

Natural Language Processing (NLP)

NLP is the linguistic backbone — it allows AI to understand and generate human language with nuance, context, and intent. Modern NLP systems don’t just match keywords; they reason about meaning, sentiment, and implication.

This is the layer that powers everything from customer service chatbots to contract analysis tools.

Computer Vision

Computer vision enables AI to “see.” It can identify objects, read text in images, detect anomalies on factory floors, analyze medical imagery, and interpret satellite photos.

Paired with NLP, computer vision transforms how AI interprets the visual world and communicates that interpretation in human-readable language.

Speech Recognition and Audio Processing

Audio modality goes beyond transcription. Advanced speech AI can detect speaker emotion, identify voice patterns for authentication, parse multilingual conversations in real time, and even generate music from text descriptions.

For businesses, this unlocks everything from intelligent call center analysis to hands-free equipment operation in industrial environments.

Sensor Fusion and Cross-Modal Reasoning

This is the frontier that excites engineers most. Sensor fusion is the ability to combine data streams from cameras, LiDAR, IoT sensors, GPS, and environmental monitors into a unified situational picture.

In autonomous vehicles, it’s what keeps the car in its lane. In smart manufacturing, it’s what predicts equipment failure before it happens.

Cross-modal reasoning is the AI’s ability to draw insights that span multiple modalities at once — seeing a crack in a pipe through camera footage while correlating it with pressure sensor anomalies.
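To make the pipe example concrete, here is a minimal Python sketch of the idea. It is an illustration under stated assumptions, not a production design: `crack_score` stands in for the confidence of a hypothetical vision model, the pressure values are made up, and the thresholds are arbitrary. An alert is raised only when both modalities agree.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Reading:
    timestamp: int        # seconds since monitoring started
    crack_score: float    # hypothetical vision-model confidence, 0..1
    pressure_kpa: float   # pressure sensor value (illustrative numbers)


def pressure_z_scores(readings):
    """Standardize pressure values so outliers stand out."""
    values = [r.pressure_kpa for r in readings]
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0] * len(values)
    return [(v - mu) / sigma for v in values]


def cross_modal_alerts(readings, crack_threshold=0.7, z_threshold=2.0):
    """Return timestamps where camera AND pressure both look abnormal."""
    z_scores = pressure_z_scores(readings)
    return [
        r.timestamp
        for r, z in zip(readings, z_scores)
        if r.crack_score >= crack_threshold and abs(z) >= z_threshold
    ]


pressures = [500, 501, 499, 500, 502, 500, 430, 499]
crack_scores = [0.1, 0.05, 0.1, 0.8, 0.1, 0.1, 0.9, 0.1]
readings = [
    Reading(timestamp=i * 10, crack_score=c, pressure_kpa=p)
    for i, (c, p) in enumerate(zip(crack_scores, pressures))
]

alerts = cross_modal_alerts(readings)
print(alerts)
```

Note the reading at 30 seconds: the camera score is high but pressure is normal, so no alert fires. Requiring agreement across modalities is what filters out single-sensor false alarms.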

Real-World Multimodal AI Use Cases by Industry

Let’s get concrete. Here’s where multimodal AI is already creating measurable business value across industries.

Healthcare — Smarter Diagnostics, Faster Decisions

Multimodal AI’s impact in healthcare is profound and growing fast: healthcare and life sciences accounted for 25.80% of the global multimodal AI market in 2025. These systems draw on clinical notes, electronic health records, medical imaging, and genomic data simultaneously.

The combined analysis identifies patterns that no single data stream could reveal on its own.

The practical result? Faster, more accurate diagnosis. Imagine an oncology decision support system that cross-references a patient’s MRI scan, their genetic markers, and a global database of similar cases to recommend a treatment pathway in minutes rather than days.

Healthcare providers are already deploying diagnostic systems that unify radiology scans with electronic records to support more accurate oncology decisions.

Multimodal AI is also transforming surgical assistance, drug discovery pipelines, and remote patient monitoring, where wearables generate continuous streams of audio, biometric, and video data that the AI synthesizes into actionable clinical alerts.

Retail and E-Commerce — The Smart Shopping Revolution

Retail is arguably where consumers feel the impact of multimodal AI most directly. Visual search is now mainstream — shoppers photograph a product on the street and find it instantly online.

Smart-shelf monitoring fuses video feeds with inventory data to eliminate stockouts in real time. AI-driven personalization engines analyze browsing behavior, voice queries, and purchase history to serve tailored recommendations across channels.

Real-time video analysis in retail is growing at a 39.80% CAGR, driven by live-stream commerce and social platforms injecting terabytes of video per second into enterprise workflows.

Captioning, content moderation, and shoppable video generation are all benefiting from multimodal capabilities.

One standout example: Zenpli, a digital identity company, used multimodal AI via Google’s Vertex AI to achieve a 90% faster onboarding process and a 50% reduction in costs through AI-powered document and identity verification. That’s not a research benchmark — that’s a live production deployment.

Manufacturing — Eyes on the Factory Floor

Manufacturing is one of the sectors where multimodal AI creates the most immediate ROI. According to Mordor Intelligence, 87% of manufacturers are currently running generative AI pilots to improve visual inspection and predictive maintenance in production lines.

Imagine an AI system that simultaneously watches a conveyor belt through multiple cameras, monitors vibration sensors on rotating equipment, and cross-references thermal imaging with historical failure data — all in real time.

When it detects an anomaly pattern consistent with bearing wear, it schedules a maintenance window before the machine fails, saving six figures in unplanned downtime.
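As a rough illustration of the "detect before it fails" logic, the Python sketch below flags a machine only when vibration stays above a threshold for several consecutive readings, so a single spike does not trigger a work order. The readings, the baseline, and the 1.5x ratio are all made-up assumptions; a real system would learn these from historical failure data.

```python
from collections import deque


def first_sustained_exceedance(values, baseline, ratio=1.5, window=3):
    """Index where the last `window` readings all exceed baseline * ratio.

    Returns None if vibration never stays elevated for a full window.
    """
    limit = baseline * ratio
    recent = deque(maxlen=window)   # rolling record of "above limit?" flags
    for index, value in enumerate(values):
        recent.append(value > limit)
        if len(recent) == window and all(recent):
            return index
    return None


# Illustrative vibration velocity readings in mm/s, one per hour.
vibration_mm_s = [2.0, 2.1, 3.4, 2.0, 2.2, 3.1, 3.3, 3.6, 4.0]
baseline_mm_s = 2.0

alert_index = first_sustained_exceedance(vibration_mm_s, baseline_mm_s)
if alert_index is not None:
    print(f"Schedule maintenance: sustained vibration at reading {alert_index}")
```

The isolated spike at the third reading is ignored; only the sustained rise near the end raises the alert.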

Energy producers are using a similar model, combining drone footage with sensor telemetry for remote infrastructure inspection in locations that are too dangerous or expensive for human teams to visit regularly.

Finance — Fraud Detection Gets a Superpower

In financial services, multimodal AI is rewriting the fraud detection playbook.

Traditional rule-based fraud systems can only act on what they’ve seen before. Multimodal systems correlate transaction data, behavioral biometrics (how a user types or moves a mouse), voice authentication patterns during phone calls, and facial recognition during video verification sessions — all simultaneously.

The result is a fraud detection system that understands context, not just patterns. A transaction from an unusual location might normally trigger a flag, but if the AI can simultaneously verify the customer’s voice during a quick call and confirm their behavioral fingerprint on the app, the false positive rate drops dramatically — improving both security and customer experience in one move.
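Here is a minimal Python sketch of that decision logic. The field names, the 0.9 threshold, and the three outcomes are illustrative assumptions, not any vendor's actual scoring model. The point is simply that extra modalities give the system a way to clear a location flag instead of blocking outright.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    unusual_location: bool
    voice_match: float      # 0..1, hypothetical voice-authentication similarity
    behavior_match: float   # 0..1, hypothetical behavioral-biometric similarity


def decide(signals: Signals, match_threshold: float = 0.9) -> str:
    """Combine the location flag with two identity signals."""
    if not signals.unusual_location:
        return "approve"
    voice_ok = signals.voice_match >= match_threshold
    behavior_ok = signals.behavior_match >= match_threshold
    if voice_ok and behavior_ok:
        return "approve"    # both modalities confirm the customer
    if voice_ok or behavior_ok:
        return "step_up"    # ask for one more verification step
    return "review"         # hand off to a human fraud analyst


traveling_customer = Signals(unusual_location=True, voice_match=0.96, behavior_match=0.93)
suspicious_session = Signals(unusual_location=True, voice_match=0.40, behavior_match=0.35)

print(decide(traveling_customer))
print(decide(suspicious_session))
```

A location-only rule would have flagged both sessions. With the extra signals, the genuine traveler goes through and only the suspicious session reaches an analyst.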

Figure, a fintech company offering home equity lines of credit, uses multimodal AI to power chatbots that streamline the entire lending process for both consumers and employees — simplifying what was once a complex, document-heavy experience into a conversational, guided journey.

Education — Learning That Actually Engages

Education technology has been transformed by multimodal AI’s ability to create personalized, multi-sensory learning experiences.

Platforms like Khan Academy Kids and Duolingo have long combined visuals, audio, and structured prompts to guide learning — multimodal AI takes this further by adapting difficulty, modality, and pacing to each learner’s real-time responses.

Think of it as having a private tutor who notices when you’re confused (from facial expression analysis), adjusts the explanation to a different modality (switching from text to a visual diagram), and slows down if your response times suggest you’re struggling — all without any explicit input from you.

For corporate learning and development teams, this means training programs that measurably reduce time-to-competency and improve retention rates compared to traditional e-learning modules.

Hospitality and Travel — Personalized at Scale

Hilton’s AI-powered robot concierge, “Connie,” combines natural language processing with physical interaction to answer guest questions naturally.

That’s one visible example of a broader trend: hospitality businesses using multimodal AI to analyze everything from guest reviews and booking histories to sentiment during check-in calls, enabling hyper-personalized experiences at scale.

Predictive maintenance in hotels is another quiet but high-value use case. Multimodal AI combines sensor data from HVAC systems, kitchen equipment, and building infrastructure to predict failures before guests ever notice a problem — protecting the brand experience while reducing emergency repair costs.

Multimodal AI vs. Unimodal AI: Side-by-Side Comparison

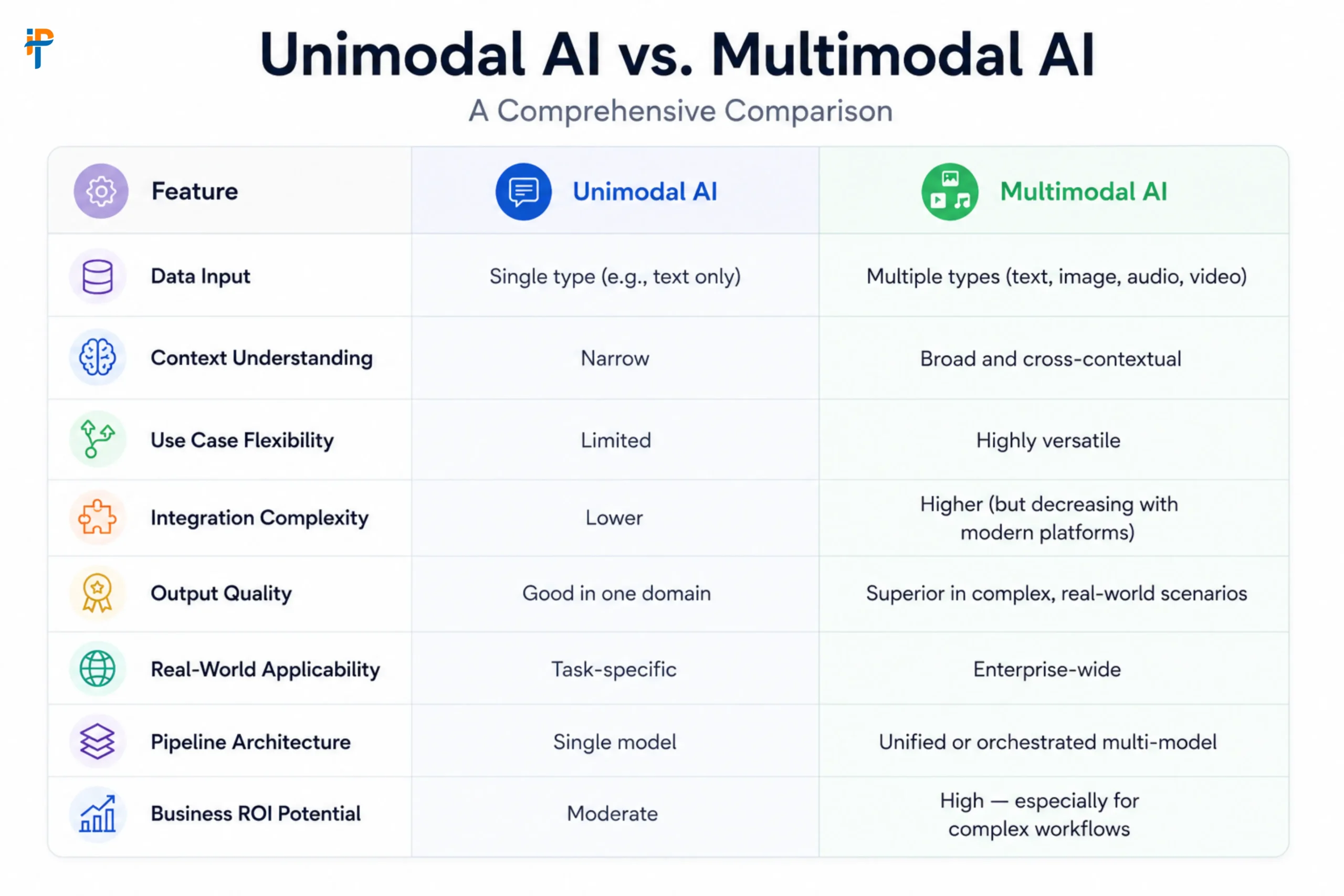

| Feature | Unimodal AI | Multimodal AI |
|---|---|---|
| Data Input | Single type (e.g., text only) | Multiple types (text, image, audio, video) |
| Context Understanding | Narrow | Broad and cross-contextual |
| Use Case Flexibility | Limited | Highly versatile |
| Integration Complexity | Lower | Higher (but decreasing with modern platforms) |
| Output Quality | Good in one domain | Superior in complex, real-world scenarios |
| Real-World Applicability | Task-specific | Enterprise-wide |
| Pipeline Architecture | Single model | Unified or orchestrated multi-model |
| Business ROI Potential | Moderate | High, especially for complex workflows |

Top Multimodal AI Models Business Leaders Should Know in 2026

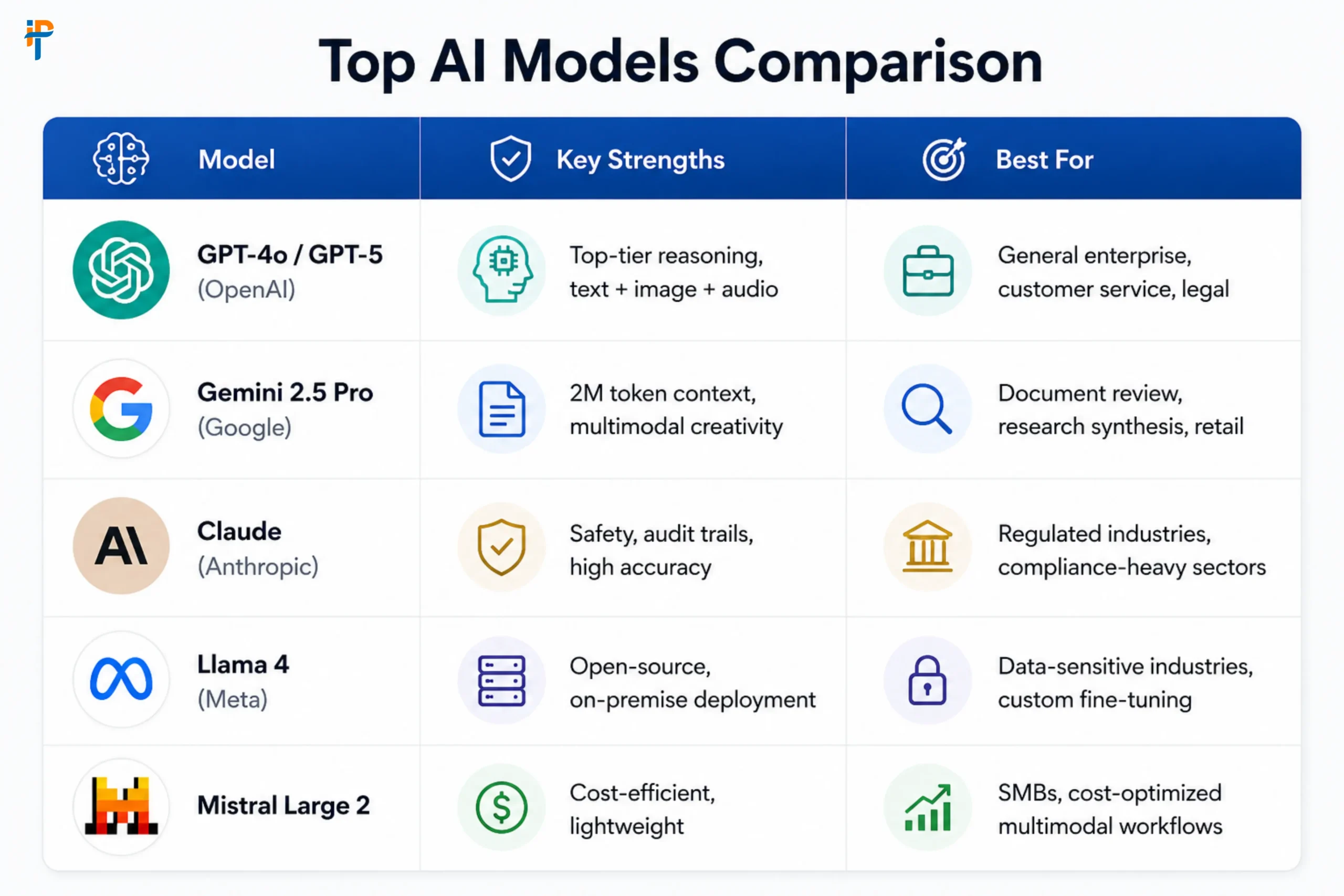

| Model | Key Strengths | Best For |
|---|---|---|
| GPT-4o / GPT-5 (OpenAI) | Top-tier reasoning, text + image + audio | General enterprise, customer service, legal |
| Gemini 2.5 Pro (Google) | 1M-token context at launch, multimodal creativity | Document review, research synthesis, retail |
| Claude (Anthropic) | Safety, audit trails, and high accuracy | Regulated industries, compliance-heavy sectors |
| Llama 4 (Meta) | Open-weight, on-premise deployment | Data-sensitive industries, custom fine-tuning |
| Mistral Large 2 | Cost-efficient, lightweight | SMBs, cost-optimized multimodal workflows |

Note: Model capabilities evolve rapidly. Always validate with production data for your specific use case.

How to Implement Multimodal AI in Your Business — A Practical Roadmap

So you’re convinced the technology matters. Now what? Here’s a pragmatic three-step framework to move from interest to implementation.

Step 1 — Identify High-Impact Use Cases

Don’t start with the technology. Start with your business problems.

Where does your team currently waste hours processing multiple types of data manually? Where do human reviewers struggle to synthesize visual and textual information quickly? Where is slow decision-making costing you money?

Common high-ROI entry points include customer support automation (voice + text + image), quality control in manufacturing (video + sensor data), document processing in finance or legal (text + image in PDFs), and personalized marketing (behavioral + visual + transactional data).

Prioritize based on two dimensions: business value if solved, and feasibility given your existing data infrastructure.
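One simple way to run that prioritization is a two-factor score. The Python sketch below ranks the entry points mentioned above by value multiplied by feasibility. The 1-to-5 scores are placeholders we invented for illustration; your own workshop or steering committee should supply the real ones.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_value: int   # 1 (low) to 5 (high), placeholder score
    feasibility: int      # 1 (hard) to 5 (easy), placeholder score

    @property
    def priority(self) -> int:
        # Multiplying penalizes ideas that are weak on either dimension.
        return self.business_value * self.feasibility


candidates = [
    UseCase("Customer support automation", business_value=4, feasibility=4),
    UseCase("Manufacturing quality control", business_value=5, feasibility=2),
    UseCase("Document processing", business_value=4, feasibility=5),
    UseCase("Personalized marketing", business_value=3, feasibility=3),
]

ranked = sorted(candidates, key=lambda uc: uc.priority, reverse=True)
for use_case in ranked:
    print(f"{use_case.priority:>2}  {use_case.name}")
```

Multiplying rather than adding is a deliberate choice: a high-value idea that your data infrastructure cannot support yet drops down the list instead of crowding out a quick win.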

Step 2 — Choose the Right Model and Partner

The model decision depends on your industry, data sensitivity, and performance requirements. Regulated industries like healthcare and finance should lean toward models with strong audit trails (like Claude) or on-premise deployment options (like Llama).

Companies needing large-scale document analysis benefit from Gemini’s extended context window. Cost-sensitive SMBs might find Mistral or fine-tuned open-source models more appropriate.

Equally important is your implementation partner. Multimodal AI systems require expertise across data engineering, API integration, model fine-tuning, and ethical governance.

This is not a plug-and-play tool — it’s an engineering initiative.

Step 3 — Build, Integrate, and Iterate

Start with a focused pilot on your highest-priority use case. Define clear success metrics before you begin — not vanity metrics like “the AI responded correctly 80% of the time,” but business metrics like “customer onboarding time reduced by X hours” or “quality defect rate dropped by Y%.”

Once your pilot demonstrates value, integrate the system into your existing workflows via APIs or middleware. Then iterate — multimodal AI systems improve with more data and feedback loops. The companies getting the best results aren’t those who deployed once; they’re the ones who treat AI as a living system they continuously refine.

Challenges of Multimodal AI Adoption (And How to Overcome Them)

Let’s be realistic. Multimodal AI is powerful, but it comes with genuine implementation challenges. Knowing them up front helps you plan around them.

Data Complexity and Integration

Fusing data from multiple modalities — photos, sensor readings, text documents, audio transcripts — is technically complex. Each data type has different formats, quality standards, and preprocessing requirements.

Building robust data pipelines that normalize these inputs and feed them into a unified model requires significant engineering effort.

The practical solution is to leverage cloud-based AI platforms (Google Vertex AI, Amazon SageMaker, Azure AI) that provide pre-built connectors, data preprocessing tools, and model serving infrastructure. These platforms dramatically reduce the time-to-deployment for enterprise teams.

Computational Cost

Multimodal models are larger and more computationally intensive than their unimodal counterparts.

Running them at scale can become expensive quickly — particularly for real-time video analysis or continuous sensor monitoring.

The key is smart architecture: use edge AI for latency-sensitive, low-power tasks (like quality control cameras on a factory floor) and cloud-based inference for complex, intermittent tasks (like monthly financial report analysis). Don’t run a sledgehammer where a scalpel will do.
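The edge-versus-cloud rule can be written down as a small routing function. The Python sketch below is a simplified illustration: the `Workload` fields, the 200 ms cutoff, and the two example workloads are assumptions chosen to mirror the examples above, and a real decision would also weigh bandwidth, privacy, and hardware cost.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    max_latency_ms: int       # how quickly a result is needed
    needs_large_model: bool   # True if a small on-device model is not enough


def choose_target(workload: Workload, edge_latency_cutoff_ms: int = 200) -> str:
    """Pick where inference should run for a given workload."""
    if workload.needs_large_model:
        return "cloud"   # complex analysis goes to larger hosted models
    if workload.max_latency_ms <= edge_latency_cutoff_ms:
        return "edge"    # tight latency budget, keep it near the sensor
    return "cloud"


workloads = [
    Workload("factory QC camera", max_latency_ms=100, needs_large_model=False),
    Workload("monthly financial report analysis", max_latency_ms=3_600_000, needs_large_model=True),
]

routing = {w.name: choose_target(w) for w in workloads}
print(routing)
```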

Ethical Governance and Bias

Multimodal AI introduces new dimensions of bias risk. Facial recognition systems can perpetuate racial biases.

Audio analysis tools can be less accurate for certain accents or voice types. When multiple modalities are fused, biases can compound in unexpected ways.

Responsible implementation requires pre-deployment fairness audits, diverse training data, continuous monitoring post-launch, and clear human-override protocols.

Regulatory milestones like the EU AI Act are formalizing these requirements, particularly for high-risk applications in healthcare, finance, and public safety.
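A pre-deployment fairness audit can start very simply: measure accuracy separately for each group and look at the gap. The Python sketch below does this for a hypothetical speech system evaluated on two accent groups. The records are invented for illustration, and the group labels are placeholders; real audits use larger samples and more than one fairness metric.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, actual_label)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(accuracies):
    """Largest difference in accuracy between any two groups."""
    return max(accuracies.values()) - min(accuracies.values())


# Invented evaluation results: (accent group, predicted intent, true intent).
evaluation = [
    ("accent_a", "refund", "refund"),
    ("accent_a", "cancel", "cancel"),
    ("accent_a", "refund", "refund"),
    ("accent_a", "upgrade", "upgrade"),
    ("accent_b", "refund", "refund"),
    ("accent_b", "cancel", "refund"),
    ("accent_b", "upgrade", "upgrade"),
    ("accent_b", "refund", "cancel"),
]

accuracies = accuracy_by_group(evaluation)
gap = max_accuracy_gap(accuracies)
print(accuracies)
print(f"Largest accuracy gap between groups: {gap:.0%}")
```

Tracking this gap before launch and again in production monitoring turns "check for bias" from a principle into a number your team can set a threshold on.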

Why IPH Technologies Is Your Ideal Multimodal AI Partner

At IPH Technologies, we’ve spent years turning visionary ideas into production-grade solutions. With over 500 successful projects and 430+ satisfied clients, we know what separates AI demos from AI that actually moves business metrics.

Our team brings deep expertise across mobile app development, web applications, custom software engineering, and AI integration. We understand that implementing multimodal AI isn’t just a technical project — it’s a business transformation initiative that requires careful alignment with your processes, your team, and your long-term goals.

When you work with us, you’re not just getting developers. You’re getting a partner who helps you identify the right use cases, choose the right technology stack, build scalable integrations, and iterate toward measurable outcomes.

We bring agile methodologies, a track record of on-time delivery, and a genuine commitment to exceeding expectations — not just meeting project specs.

Whether you’re looking to build a healthcare diagnostic assistant, a retail personalization engine, a manufacturing quality control system, or a finance fraud detection platform, IPH Technologies has the expertise, the process, and the passion to make it happen.

Conclusion

Multimodal AI isn’t the AI of tomorrow — it’s the AI of right now.

With a market growing at nearly 30% annually, production deployments such as Zenpli’s reporting 90% faster onboarding and 50% lower costs, and the world’s largest technology companies betting hundreds of billions on its future, the business case for multimodal AI has never been clearer.

The question isn’t whether to adopt it. It’s how fast you can move and how well you can execute.

Businesses that understand multimodal AI’s capabilities, identify the right use cases, and partner with experienced implementation teams will compound those advantages over time. Those who wait risk inheriting a competitive disadvantage that gets harder to close with every quarter.

If you’re ready to explore what multimodal AI can do for your specific business, the team at IPH Technologies is ready to help you chart that path — step by step, use case by use case, result by result.
